AI Ethics in Social Media: What Creators Need to Know

AI now drives what you see on social media, shaping content visibility and engagement. For creators, this brings both opportunities and challenges. Algorithms predict user behaviour, but they often reflect biases, lack transparency, and prioritise sensationalism over quality. This affects visibility, especially for smaller or niche creators, and raises ethical concerns.

Key takeaways:

- Algorithmic Bias: AI can unfairly suppress certain content, favouring mainstream or viral posts over niche creators.

- Lack of Transparency: Platforms rarely explain how algorithms work, leaving creators guessing about reach and penalties.

- Engagement vs. Quality: AI rewards clicks and shares, often at the expense of substance.

To navigate this, creators should:

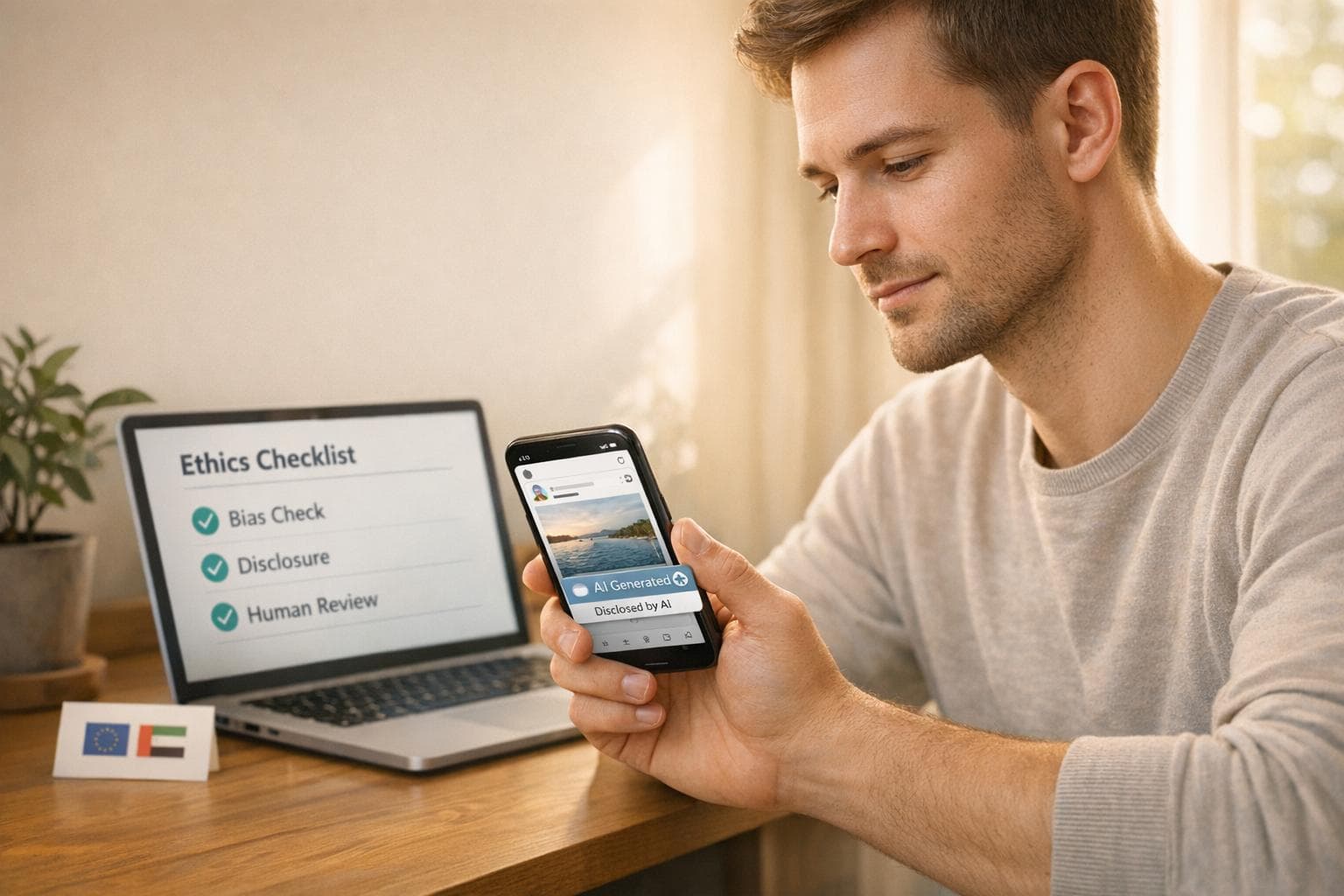

- Review AI Outputs: Ensure fairness and avoid stereotypes.

- Disclose AI Use: Platforms like YouTube and Meta now require clear labelling for AI-generated content.

- Leverage Ethical Tools: Use platforms like Posterly, which help maintain fairness and avoid penalties.

Additionally, new global regulations, like the EU AI Act (effective August 2026) and the UAE AI Act 2026, require creators to disclose AI involvement and ensure compliance with local standards. For UAE-based creators, this includes adhering to transparency notices, registering AI tools, and obtaining advertiser permits for sponsored content.

::: @figure  {AI Ethics in Social Media: Key Statistics and Impact on Content Creators}

:::

How Algorithmic Bias Affects Content Ranking

What Is Algorithmic Bias?

Algorithmic bias occurs when AI systems make decisions influenced by flawed data or poorly designed ranking criteria. Instead of simply ranking content based on quality, these systems predict viewer-specific outcomes - like how long someone might watch, how satisfied they’ll feel, or how likely they are to engage. As Mangesh Shahi from Spoclearn puts it:

"Algorithms don't 'rank content.' They rank predicted outcomes per viewer - watch time, satisfaction, meaningful interaction, and increasingly, trust" [5].

The problem arises when AI, trained on biased historical data, perpetuates stereotypes in its predictions. For example, a 2023 investigation by Schellmann and Mauro for The Guardian found that AI content-screening tools often rated everyday images of women - like those showing exercise or pregnancy - as more "sexually suggestive" than similar images of men. This led to unfair suppression of female creators' content, especially in fitness and lifestyle niches [6].

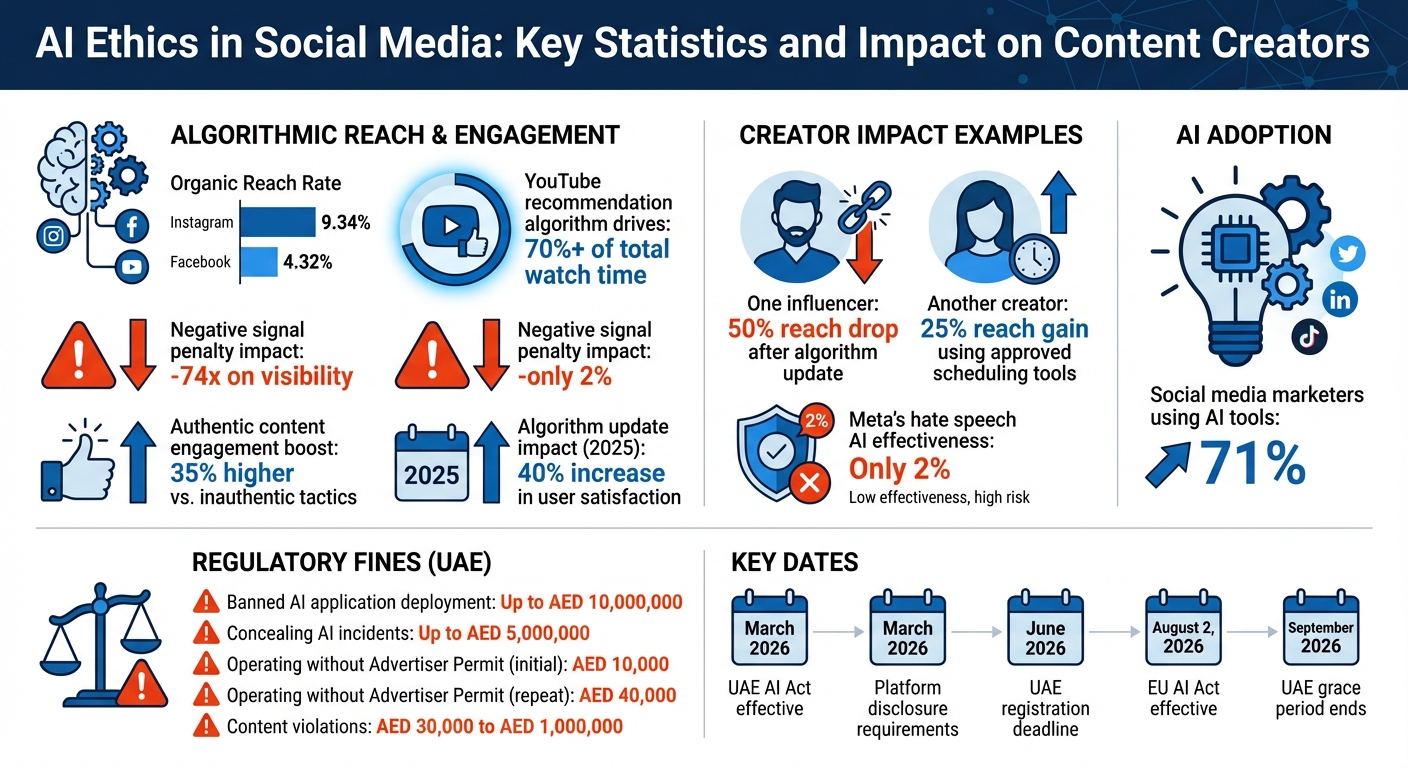

Engagement-driven metrics also prioritise sensational content over balanced or nuanced material. YouTube’s recommendation algorithm, for instance, is responsible for more than 70% of the platform’s total watch time [3]. This highlights how much control these systems have over what audiences consume, often amplifying biased content.

These biases don’t just affect content quality - they also influence creators' visibility and authority.

How Bias Impacts Creators

For creators, algorithmic bias creates significant hurdles in reaching their audiences. AI systems often favour mainstream, viral content, sidelining creators who focus on niche or community-driven topics. With Instagram's organic reach rate averaging around 9.34% and Facebook’s dropping to 4.32% [6], visibility is already a challenge. For newer or underrepresented voices, it’s even harder to gain traction.

The issue is compounded by metrics that favour established creators. Historical engagement and negative signals - like penalties for low-performing posts - can severely limit a creator’s reach. Negative signals can also carry disproportionate weight, with one reported figure putting the visibility penalty at -74x [4]. This makes it much harder for emerging creators to gain momentum without an existing audience.

Adding to the frustration is the lack of transparency in how these algorithms work. Meta’s AI systems for combating hate speech, for example, have been reported as only 2% effective [3]. Yet creators often see their reach reduced or content suppressed without clear explanations. In early 2025, one influencer saw their reach drop by 50% after an algorithm update, while another gained 25% simply by using approved scheduling tools [1]. These unpredictable swings can make or break a creator’s career, leaving many feeling powerless against the system.

Ethical Challenges of AI in Social Media

Lack of Transparency in AI Systems

Creators often find themselves grappling with opaque algorithms that seem to arbitrarily suppress or promote content. Social media platforms rarely explain the reasoning behind these decisions, leaving creators guessing where they may have gone wrong. This lack of clarity makes it incredibly difficult to build reliable strategies for success.

Algorithm updates only add to the unpredictability. Changes can drastically alter content visibility, often without warning. As Alex Ramirez, an AI SEO Specialist, puts it:

"The ethical landscape involves challenges in transparency, accountability, and defining 'fairness' in AI systems" [7].

A 2019 study by Northeastern University revealed troubling biases in Facebook's ad delivery system. Even when advertisers selected neutral targeting options, the system skewed housing and job ads based on demographic factors [7]. For creators, this lack of transparency means they can’t discern whether reduced reach is due to the quality of their work, algorithmic bias, or technical glitches.

In a 2025 study by AlgorithmWatch, YouTube's recommendation system was found to disproportionately suggest conspiracy theory videos to users who had previously engaged with similar content [10]. This left creators of balanced, educational material struggling for visibility against more sensationalist content. Such opacity not only erodes trust but also opens the door to further ethical concerns in how content is amplified.

How AI Amplifies Harmful Content

AI systems don’t just rank content - they amplify it, often in problematic ways. Social media platforms prioritise content that keeps users engaged, which frequently means promoting outrage, fear, or controversy over meaningful dialogue. This puts creators in a difficult position, forcing them to choose between creating thoughtful content or chasing sensationalism.

The danger becomes even more apparent when AI amplifies misinformation. For instance, in March 2022, during the early days of the Ukraine invasion, a deepfake video of President Volodymyr Zelensky falsely urging troops to surrender circulated on social media and appeared on a hacked news station [2]. Although the video was swiftly removed, it showed how quickly AI-generated media can spread harmful content at scale.

But the issue isn’t limited to deepfakes. AI algorithms curate user feeds based on preferences, often creating echo chambers that reinforce biases and limit exposure to diverse viewpoints [10]. Mustafa Jamal Nasser, an AI ethics researcher, notes:

"AI algorithms are built and trained on large datasets, and the quality of these datasets directly impacts the AI's decision-making capabilities" [10].

As a result, content that challenges existing beliefs or offers nuanced perspectives is often overshadowed by material that simply confirms preexisting opinions.

Engagement Metrics vs. Content Quality

Another ethical dilemma arises from the way AI prioritises engagement metrics over content quality. Creators often face a tough choice: optimise their content to meet algorithmic standards or focus on delivering genuine value. Unfortunately, AI systems tend to reward content that drives clicks, likes, and shares - metrics that don’t always reflect accuracy or depth.

This pressure to chase metrics can lead to compromises. Efe Onsoy, CEO of MoreThanPanel, explains:

"Ethical AI has become a competitive advantage, rather than a choice" [8].

Creators may feel compelled to dilute their message or adjust their style to align with unclear platform standards [6].

However, there’s evidence that authenticity can still succeed. Accounts that avoid aggressive automation often see 35% higher engagement compared to those relying on inauthentic tactics [9]. In 2025, algorithm updates that prioritised original content over spam led to a 40% increase in user satisfaction [1]. While these changes suggest platforms are beginning to address the issue, the core conflict remains: AI continues to favour engagement metrics over substance, leaving creators to navigate the difficult balance between what algorithms reward and what audiences genuinely need.

How Creators Can Navigate AI Ethics

Creating Content That Includes Everyone

Making content that resonates with everyone starts by acknowledging a key challenge: AI systems often rely on training data that doesn't fully represent all groups. This can lead to biased outputs. To counteract this, creators should prioritise tools developed with diverse datasets and actively review AI-generated content for fairness and inclusivity.

Human involvement plays a crucial role here. Regularly review AI outputs to ensure they reflect a range of perspectives. Go a step further by seeking feedback from communities that might be underrepresented in your content. This ensures accuracy and demonstrates respect.

You can also use tools designed to identify and address bias, such as debiasing software. When working on AI-generated images or videos, be intentional about including references to different cultures, regions, and viewpoints. This helps avoid a default to narrow or stereotypical representations [14].

By embedding inclusivity into your process, you not only improve your content but also uphold the transparency that should guide all AI use.

Being Transparent About AI Use

Transparency isn't just a good practice anymore - it's becoming a requirement. By March 2026, major platforms will enforce rules for disclosing AI-generated content. For example, YouTube will require creators to toggle a disclosure option for realistic altered content, while Meta will mandate labelling for photorealistic videos or audio before they go live [13].

Being upfront builds trust. Simple labels like "AI-generated image" or using hashtags such as #CreatedWithAI make it clear when technology is involved [11][13]. As Efe Onsoy, CEO of MoreThanPanel, puts it:

"The key factor of whether AI boosts expansion or destroys trustworthiness is transparency, originality, and moral accountability" [8].

The best creators strike a balance - using AI for efficiency but adding their personal touch. For instance, they refine AI-generated captions and include their own insights rather than relying on unedited machine outputs [8][11].

By pairing transparency with ethical practices, creators can strengthen their audience's trust while broadening their reach - an approach that platforms like Posterly actively support.

Using Posterly for Ethical Content Creation

Posterly offers tools designed to help creators produce content that aligns with ethical standards. Its features are built to avoid penalties like shadowbans while ensuring fairness in how your content is distributed. For example, Posterly’s smart scheduling mimics natural posting patterns, and its predictive analytics suggest topics that are likely to engage your audience - all while keeping authenticity at the forefront [9][12].

The platform also integrates advanced tools like Nano Banana Pro for image creation and Veo for video generation. These features allow creators to produce high-quality visuals efficiently, but with full control over the final output. This ensures that every piece of content is reviewed and ethically sound before it’s published.

Legal and Regulatory Considerations

Global AI Regulations

AI regulations are reshaping content creation globally. The EU AI Act, effective from 2 August 2026, applies to anyone whose content reaches EU audiences, regardless of location [16][17]. Under Article 50, creators - referred to as "deployers" - must clearly disclose deepfakes and AI-generated text, especially on public interest topics [17][18].

The law distinguishes between providers (those developing AI systems) and deployers (those using them). Creators must label AI-generated content using methods like machine-readable watermarks or metadata that persist even after cropping or compression [17]. However, there's an exception: if AI-generated content undergoes extensive human review - including fact-checking, rewriting, and final approval - it can be classified as human-led. Melissa Blevins from Dragonfly Editorial explains:

"When AI-generated text undergoes substantial human review - fact-checking, rewriting, judgement calls, and final approval - responsibility shifts to the human author... content is generally treated as human-led" [16].

Outside the EU, 15 US states have enacted AI transparency laws as of Q2 2026 [15]. California and Texas require synthetic media labels in advertisements or elections, while New York mandates AI disclosure for influencers with over 100,000 followers [15]. Meanwhile, China's 2026 regulations demand invisible watermarks in AI-generated media, detectable only by regulatory tools [15].

To stay compliant, creators should log metadata - including prompts, seeds, and timestamps - for every AI-generated asset. This practice isn’t just advisable; it’s what auditors will expect [15]. And keep in mind: EU regulations apply based on where content is consumed, not where it’s created [16].
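The logging habit described above can be sketched in a few lines of Python. This is a minimal illustration, not a format that any regulator or platform prescribes: the `AssetRecord` fields, the function names, and the JSON Lines log file are all assumptions chosen for the example. It records the prompt, seed, and a UTC timestamp for each AI-generated asset.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AssetRecord:
    """One log entry per AI-generated asset (field names are illustrative)."""
    asset_name: str
    tool: str
    prompt: str
    seed: Optional[int]  # not every tool exposes a seed
    created_at: str      # ISO 8601 timestamp in UTC


def make_record(asset_name: str, tool: str, prompt: str,
                seed: Optional[int] = None) -> AssetRecord:
    """Build a record stamped with the current UTC time."""
    timestamp = datetime.now(timezone.utc).isoformat()
    return AssetRecord(asset_name, tool, prompt, seed, timestamp)


def to_json_line(record: AssetRecord) -> str:
    """Serialise a record as a single JSON line."""
    return json.dumps(asdict(record), ensure_ascii=False)


def append_to_log(record: AssetRecord, log_path: str) -> None:
    """Append the record to a JSON Lines file, one asset per line."""
    with open(log_path, "a", encoding="utf-8") as log_file:
        log_file.write(to_json_line(record) + "\n")


# Hypothetical usage: log a generated video before publishing it.
record = make_record("launch-teaser.mp4", "Veo", "sunrise over a city skyline", seed=42)
print(to_json_line(record))
```

An append-only file keeps earlier entries untouched, which makes it easier to show later when each asset was generated and with which prompt.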

These global frameworks are paving the way for region-specific rules, including those in the UAE.

AI Ethics in the UAE

The UAE has introduced its own AI framework, the UAE AI Act 2026, which came into effect in March 2026. This regulation uses a four-tier risk classification system that balances practicality with oversight [19]. For most creators, work will fall under Tier 1 (Minimal Risk) or Tier 2 (Limited Risk) categories. For instance, content recommendation systems fall under Tier 1 and require only a transparency notice, while Tier 2 tasks - like generating videos with tools such as Veo or images with Nano Banana Pro - require registration and annual reporting to the UAE AI Authority [19].

Violating these rules can lead to hefty penalties. Deploying banned AI applications can result in fines up to AED 10,000,000, while concealing AI-related incidents may incur fines of up to AED 5,000,000 [19]. The Act also prohibits systems like social scoring, unauthorised emotion recognition, and subliminal manipulation [19]. As the UAE Minister of AI, Digital Economy & Remote Work Applications stated:

"The UAE AI Act establishes a global benchmark for AI governance. By creating clear, proportionate rules, we are giving businesses the certainty they need to invest in AI while ensuring that innovation serves humanity" [19].

If you're publishing sponsored or promotional content in the UAE, you’ll need an Advertiser Permit from the UAE Media Council. This permit is free for UAE citizens and residents for the first three years [22][21]. Operating without one carries an initial fine of AED 10,000, which increases to AED 40,000 for repeated violations [22][21]. Permit numbers must be displayed on social media profiles and within sponsored posts [22][20].

Content creators must also adhere to 20 mandatory standards outlined in Federal Media Law No. 55 of 2023, ensuring alignment with UAE values, social harmony, and respect for religion [21]. Violations, such as disrespecting religion or national unity, can lead to fines ranging from AED 30,000 to AED 1,000,000 [20][21]. Before using AI tools for content automation, verify that your training data and prompts comply with these standards [20][21].

The UAE has provided a six-month grace period, starting in March 2026, with full enforcement beginning in September 2026 [19]. Use this time to review your AI tools, classify them by risk tier, and complete any necessary registrations for Tier 2 or higher systems. The deadline for registration and self-assessment is June 2026 [19].
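The review step above can be turned into a simple checklist. The sketch below is illustrative only: it encodes just the two tiers and obligations described in this section, leaves out the higher tiers because their requirements are not covered here, and uses hypothetical names (`RiskTier`, `OBLIGATIONS`, `checklist`). Which tier a given tool falls under is an assumption you would need to confirm against the Act itself.

```python
from enum import Enum


class RiskTier(Enum):
    MINIMAL = 1  # Tier 1, e.g. content recommendation systems
    LIMITED = 2  # Tier 2, e.g. AI video or image generation


# Obligations as summarised in this article; not an exhaustive legal list.
OBLIGATIONS = {
    RiskTier.MINIMAL: ["transparency notice"],
    RiskTier.LIMITED: ["registration with the UAE AI Authority", "annual reporting"],
}


def checklist(tools: dict[str, RiskTier]) -> dict[str, list[str]]:
    """Map each tool in an inventory to the obligations for its tier."""
    return {name: list(OBLIGATIONS[tier]) for name, tier in tools.items()}


# Hypothetical inventory; the tier assignments are assumptions.
inventory = {
    "feed recommender": RiskTier.MINIMAL,
    "Veo": RiskTier.LIMITED,
    "Nano Banana Pro": RiskTier.LIMITED,
}

for tool, duties in checklist(inventory).items():
    print(f"{tool}: {', '.join(duties)}")
```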

The Impact of AI on Marketing, Ethics, and Creative Content with Avery Swartz

::: @iframe https://www.youtube.com/embed/iJSBMTtAF48 :::

Conclusion

Ethical AI use in social media isn’t just about following rules - it’s about creating long-term success for content creators. Studies show that authentic and original content consistently leads to higher engagement rates [9][1]. Platforms have signalled the same priority through algorithm updates that favour original content, and audiences continue to reward creators who prioritise genuine, relatable content.

AI can be a powerful tool, but it works best when paired with human creativity. Use AI for tasks like scheduling posts or drafting content, but always follow up with a personal review. Adding even a short, original sentence or a specific example can help retain your unique voice and make your content stand out [23].

Transparency is another key to maintaining trust. Clearly disclose when AI is involved in creating content to stay aligned with ethical standards and new guidelines [11][23]. Tools like Posterly make this easier by offering features like API-compliant scheduling, brand voice consistency, and client approval workflows to ensure quality before publishing [24]. Its seamless integration with Nano Banana Pro for images and Veo for video creation allows you to use advanced AI while keeping full control over your final content.

To stay ahead, regularly evaluate your AI tools, categorise them by risk, and create workflows that balance automation with authenticity. With 71% of social media marketers already using AI tools [23], the creators who thrive will be those who use them responsibly - focusing on genuine engagement, fact-checking, and safeguarding their audience’s trust. By combining ethical AI practices with thoughtful human oversight, creators can build trust and achieve lasting success in a fast-changing digital world.

FAQs

::: faq

How can I tell if the algorithm is biased against my content?

To spot potential bias affecting your content, keep an eye on visibility and engagement metrics over time. If your posts consistently get fewer impressions, likes, or shares compared to similar content, it could signal an issue. Sudden declines after including certain keywords or experimenting with specific content types might also hint at bias.

Make sure your content aligns with the platform's guidelines to avoid unnecessary penalties. Tools like Posterly can help you track trends, identify problem areas, and fine-tune your strategy effectively. :::
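The comparison described in the answer above can be sketched as a small calculation. This is a rough illustration under stated assumptions: the function name `reach_drop`, the 7-day window, and the sample numbers are all hypothetical. A large drop only flags a change worth investigating; it does not show that bias caused it.

```python
from statistics import mean


def reach_drop(daily_impressions: list[int], window: int = 7) -> float:
    """Fractional drop in average impressions, latest window vs the one before.

    Returns 0.5 for a 50% drop; a negative value means reach went up.
    """
    if len(daily_impressions) < 2 * window:
        raise ValueError("need at least two full windows of data")
    baseline = mean(daily_impressions[-2 * window:-window])
    recent = mean(daily_impressions[-window:])
    if baseline == 0:
        return 0.0  # no earlier reach to compare against
    return (baseline - recent) / baseline


# Hypothetical data: a week at 1,000 impressions per day, then a week at 500.
history = [1000] * 7 + [500] * 7
print(f"Drop: {reach_drop(history):.0%}")
```

Running the same comparison on similar posts, or before and after a specific keyword or format change, helps separate a platform-wide shift from something specific to your content.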

::: faq

What qualifies as “AI-generated” content that needs disclosure?

AI-created content that needs disclosure generally includes anything developed, altered, or improved with artificial intelligence. This could involve text, images, audio, or video. For example, materials generated using tools like Nano Banana Pro for visuals or Veo for videos, both available through Posterly, should be clearly identified as AI-produced. Being upfront about this not only aligns with changing regulations but also helps avoid fines and fosters trust with your audience by maintaining ethical transparency. :::

::: faq

What must UAE creators do to comply with the UAE AI Act 2026?

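Under the UAE AI Act 2026, creators should classify each AI tool they use by risk tier, provide a transparency notice for Tier 1 (Minimal Risk) systems, and register Tier 2 (Limited Risk) systems with the UAE AI Authority, which also involves annual reporting. Registration and self-assessment are due by June 2026, with full enforcement beginning in September 2026. Separately, from 1 February 2026, creators publishing promotional content online, whether paid or unpaid, need a valid Advertiser Permit from the UAE Media Council. Make sure you have your permit in place to avoid fines. :::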
